Socialism & AI: Beyond the Threat to Labour

by Imogen Xavier

For socialists striving for a society that does away with the need for surplus labour, automation can be a utopian prospect. The popular vision of so-called “fully automated luxury communism” presents a scenario in which it is easy to envision generative AI as a useful and productive tool that erases white-collar administrative work. It is tempting, then, to see gen-AI as yet another technology ill-employed by capitalists, like smartphones and computers before it. There are, however, important differences between these technologies, on the grounds of which gen-AI as it exists today should be completely disallowed in, and disconnected from, the modern socialist movement. The reasons for prohibiting conventional gen-AI fall into economic, ethical, and philosophical categories. Within each of these are myriad reasons to firmly oppose the work of the tech sector and AI industry from a Marxist perspective - the replacement of jobs actually being the least of our concerns.

It is important first to define our terms. Generative AI, or Large Language Model software, is, contrary to the name, not in any way sapient. These programs produce text by procedurally calculating the most statistically likely next word in a chain. It works on the same principle as predictive text on your phone keyboard, only using far more contextual information in the predictive equation. The result is coherent output that plausibly simulates sapience - it is, however, only math, not reasoning. This is why gen-AI is prone to errors: it presents the statistically most likely string of words, not a verified one. This produces the falsehoods and fabrications known as “hallucinations.”
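To make the “most statistically likely word in a chain” idea concrete, here is a minimal sketch of predictive text in Python. It is a toy stand-in, not how any commercial model is actually built: real language models use neural networks and far longer context, whereas this sketch looks only at the single previous word. The function names `train_bigrams` and `generate` and the sample corpus are invented for illustration.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following


def generate(following, start, length=5):
    """Extend `start` by repeatedly appending the most frequent next word."""
    chain = [start]
    for _ in range(length):
        candidates = following.get(chain[-1])
        if not candidates:
            break  # the last word was never followed by anything in training
        chain.append(candidates.most_common(1)[0][0])
    return " ".join(chain)


# Hypothetical training text, chosen only for illustration.
corpus = "the cat sat on the mat and the cat sat on the sofa"
model = train_bigrams(corpus)
print(generate(model, "the", length=3))  # prints "the cat sat on"
```

Nothing in the sketch checks whether a cat exists or where it sat; the program only reproduces whichever continuation was most common in its training text, which is the sense in which the output is math rather than reasoning.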

Generative AI is most commonly deployed for the following tasks, at which it is “good”:

Summarization (though the error rate requires its work to be checked)

Writing code (though it creates systems that engineers did not design and thus do not understand)

Writing text/chatbot work (though it does not understand story/argument/problem solving and writes with a bland, monotonous syntax)

Sifting large amounts of data (see again error rate, as well as malicious uses)

Creating photoreal/deepfake images and video (thrusting us into an age in which we can trust the veracity of nothing we see online)

Economic:

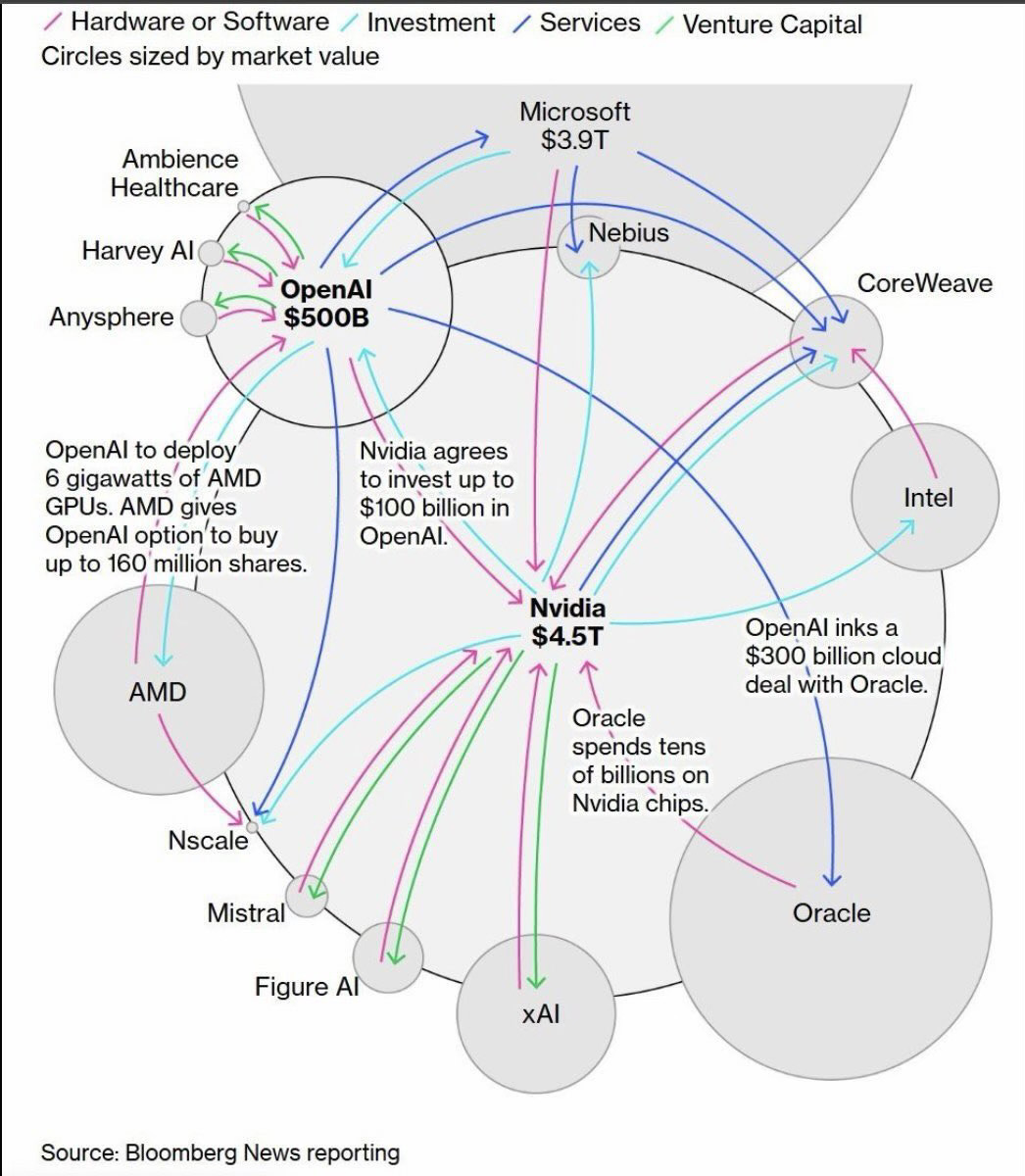

Chief among the economic issues is the financial bubble and its quasi-religious origins. Peter Thiel, Elon Musk, Sam Altman and the other billionaires behind the tech sector have devoted a huge amount of their public-facing attention to AI myth building. They claim that this technology has either utopian or apocalyptic possibilities, invoking the same moral authority rhetoric used to justify New World colonization: “if we don’t do it, the wrong people will.” The gods-and-demons version of future AI they’re describing, though, is not what we have today. The promise they’re making is AGI, or Artificial General Intelligence - the type of sentient supercomputer depicted in science fiction. All professional accounts outside of the tech sector indicate that the development of AGI is impossible. Neuroscientists, psychologists, and cognitive philosophers have for centuries sought to understand the functioning of the human brain, and to this day remain empty-handed. Without a working model of the human mind, developing AGI is a fiction. The tech sector is persuasive though, and its storytelling has attracted unprecedented amounts of capital from government contracts and private firms. This money is being passed around, primarily between Nvidia, Microsoft, Alphabet, and OpenAI, in the form of investment, hardware, and services (see the Bloomberg Bubble Graphic). The movement of huge sums creates an illusion of growth for a technology with no real demand. None of the major AI developers are making money off their products. The most successful of the bunch, Microsoft’s Copilot, loses $8 a month for every active subscription. Corporate clients, rather than consumers, are the ones shelling out. Gen-AI “agents” are replacing coders, artists, and customer service operators while doing a worse job of each task. Of course the caliber of the work doesn’t bother the corporate oligopolies. Free from the burden of providing quality goods and services, corporations are happy to pad their revenue with growth-by-shrinkage, cutting operating costs safe in the knowledge that captive markets have nowhere else to turn.

The more outwardly optimistic industry leaders are pitching international governments on a utopian neo-Georgist view of the future, in which AI-powered automation funds Universal Basic Income programs through stakeholdership. That’s where the system disruption ends, however, without redistribution or democratization. Critics on the left speculate that the truer goal of this pitch is to keep governments (specifically the US Congress) focused on an unachievable legislative goal, so as to stave off regulation in the short term and keep the bubble alive. Either way, tech CEOs drinking the Kool-Aid intend to have gen-AI facilitate a technofeudal alienation of workers, removing us further from the products we produce and from our systems of democracy, so as to consolidate their own capital and power. Similarly, the “cloud computing conspiracy” refers to another dual-possibility undermining of workers’ power. Much of the money in the tech sector is being dedicated to the construction of data centers. In the unlikely world where gen-AI finds a real long-term foothold, these hubs will precipitate deeper intrusion of gen-AI into more facets of society. In the much more likely world where the bubble collapses, these data centers will be repurposed for another tech sector agenda item: cloud computing. A monopoly on centralized computing infrastructure would doubtless be used to put local computing out of reach for the working class. Imagine your phone or laptop lacks the hardware to do anything on its own. Instead it requires a network uplink and a subscription to operate at all - opening up a new vector for rentiering, while simultaneously creating an information infrastructure chokepoint controlled by capital. With that strategic hold, personal privacy would vanish, corporate censorship would flourish, and the vast majority of communication and information processing could be withheld at a capitalist’s whim.

The ethics of privacy

We are witnessing the emergence of a hitherto impossible surveillance state, and the backswing of the imperial boomerang bringing ever more arms industry tech to bear at home. One of the primary government contract selling points for gen-AI has been recognition and targeting software (which, it should be noted, also makes frequent mistakes resulting in the deaths of civilians, with no one held accountable). Like most other applications of gen-AI, Lavender target recognition and similar programs are not innovative advancements, but flashy buzzword paintjobs to keep the billions of defence dollars flowing. By contrast, Palantir and its ilk on the surveillance side are a crop of genuinely novel programs: software that leverages gen-AI’s unique data-sifting capacity to create massive agglomerated intelligence portfolios. Of course there are more benign versions of this, like those being used to enforce copyright law, but domestic surveillance is the most sinister and as such the most worth discussing. Where previously individuals enjoyed some level of anonymity both online and in public - the invisibility of volume - without resistance and regulation gen-AI like Palantir threatens to make that camouflage a thing of the past. Decades of documents, millions of images, and hours of footage could all be scraped for incriminating details on any person in minutes. That power, moreover, would rest in the hands of a private contractor with full access to government records and intel. The implications are, needless to say, terrifying.

Neocolonial practices of sub-employment and extraction are driving tech development. Because the industry is heavily dependent on mineral resources including copper, gold, cobalt, lithium, and silica, governments around the world are overturning environmental protections and indigenous land rights on its behalf. The labour that gen-AI training datasets depend on, “data labelling,” is primarily performed in sweatshops, refugee camps, and prisons. The combined global data labelling workforce is one of the largest single-task labour groups, and it exists in a completely atomized state. These workers have no rights or protections, even in the imperial core, and are overseen and assessed by unappealable algorithms. On top of the macro conditions, data labelling is a job no human being should have to do, on account of the psychological trauma workers are forced to endure (often for no compensation and with no support). The job involves applying content tags to images and video so that language models can identify their components, and sorting out unsuitable content. That unsuitable content is snuff films, child sexual abuse material, and the worst humanity has to offer. All this is to say nothing of the recent outcry over xAI producing and disseminating such material - a violation in its own right, and one suggesting the model was trained on such materials.

Ethical considerations on data carry over to higher level technical problems like data flattening, blackboxing, and fairwashing. The latter two of these describe the process by which gen-AI decision making (in hiring, say, or legal determinations) is made unaccountable and inexplicable. Generative AIs use black-box, or nonlinear, reasoning, which means their outputs can’t be cleanly traced or explained even by the engineers that build them. Thus by performing gestures of equality (like telling an AI agent to be impartial) institutions can fairwash outcomes which may nonetheless be colored by historical bias (gen-AI reflects its training data, and will almost always default to prioritizing white people and men unless otherwise instructed). Data flattening is a term for gen-AI plagiarism, and the corporate ethos that facilitates it. Gen-AI developers regularly claim in court that the data going into their models is immaterial, rather than the product of labour, because outputs can’t be directly correlated to an individual artist, even if they would be impossible to produce without training on that artist’s work. In the creative sphere, generative AI gives capital access to the products of talent, while barring talent from ever accessing capital.

Philosophy

There are, in my opinion, more than sufficient reasons in this article already to call for the prohibition of generative AI and to actively resist its development, but the most compelling reason, the purely philosophical issue, I have saved for last. It is coincidentally the simplest. The use of gen-AI is anti-intellectual, anti-academic, and anti-literacy. It is a “tool” which strips from its users not only the skills, but the desire to think critically, read for oneself, and articulate oneself. Using gen-AI for research atrophies one’s own ability to research. Using it to summarize hamstrings one’s own ability to process information. No technology which makes us stupider, and thus easier to manipulate and control, should ever be embraced by the working class - it’s why neo-fascists love gen-AI.

Any use of generative AI keeps afloat the cultural fiction being presented by the tech sector, contributing to the oncoming economic disaster. Whether directly via subscription or indirectly via web traffic, usage supplies metrics that developers are happy to cite to keep the bubble inflated and gouge as much public money as possible.

Are there limited use cases for the technology in a socialist society? Probably: AlphaFold protein research and real-time energy grid optimization, for example. In the present material conditions, however, the threat posed by the tech sector under the banner of AI merits principled opposition from all leftists. In early 2025, Socialist Action Canada passed a motion to assume by default that the use of generative AI is forbidden in all party matters. AI is the modern industrial juggernaut, and it is driving us to ruin. Prohibition of its development and use until strict regulatory legislation can be passed should be a core demand of the modern socialist movement.

Substantial knowledge work here worthy of a congratulatory intelligence award!

The work of communicating the pervasive perils of A.I. imperialism is crucial. We need to help people understand the vicious nature of a market which seeks to extract more rents and exert more authoritarian power, all the while refusing to meet the essential needs of people and planet. A.I. is not our friend, and the mega-corporations in their fascistic power arrangement with governments must be brought under democratic socialist control fast.

Generative A.I. is a serious problem (as currently conceived and operationalized) and citizens should cooperate with each other to block and undermine its autocratic power and control.

I thought the recent speech by the Prime Minister of Spain and his five objectives to attempt to bring democracy and justice into the digital realm were worthy ideas. Here is the link - https://youtu.be/ZUW6zQ8b-vY?si=jwrQwcvnGi_cYdHG Sorry, you will have to copy and paste into your browser.